Reliable systems matter enormously to all of us, across all industries. Whether in medical technology, the automotive industry, aviation, rail, or the process industry, we rely on technical systems every day. The property we expect from them is called "dependability": the combination of reliable functionality, fail-safe operation, availability, and security. In medical technology in particular, people in need of care often depend directly on the reliability of products; think of ventilators or pacemakers, for example.

Recurring headlines about safety-relevant systems show that these properties, which customers expect, are by no means a given. Consider, for example:

- Boeing 737 Max accidents [1]

- Toyota unintended acceleration [2]

- Medtronic Pacemaker with security vulnerabilities [3]

In addition to the associated accidents and casualties, these companies are exposed to financial risk and, not least, significant damage to their reputation. Reliability should therefore be at the forefront of their product objectives.

Basic concepts

But how do you systematically develop a reliable product? Is it sufficient to simply test a product "well"? And what does testing "well" even mean? A safe system is generally defined as one that is "free of unreasonable risk." Looking at the typical causes of errors in the following figure, it becomes clear that they are far more diverse than testing alone can cover. It is also clear that only a fraction of the typical causes of errors arise from the actual implementation.

The following presents only a few selected basic concepts that are important in medical technology.

- Safety management

Systematic planning and tracking of safety activities must be part of every development of a safety-relevant system. In addition to obvious activities such as regular review planning, potentially less intuitive aspects such as competency management or the planning of distributed development must also be considered. Above all, however, safety is not a feature; it must be considered throughout the entire development lifecycle. The following figure shows one possible approach in abstract form:

- Risk management

In medical technology, risk management is a central component of the safety lifecycle. Because risks always take effect at the top system level, we recommend conducting the risk assessment at the system level and, based on it, deriving dedicated safety requirements along the safety-relevant chains of action. (Note: For highly complex and distributed systems, it may be appropriate to structure the risk assessment hierarchically.)

- Safety culture

At first glance, promoting and maintaining an appropriate safety culture within a company may seem like a secondary issue for product development. However, the examples below show that safety culture can very well change product characteristics for better or worse.

The following table contains just a few examples of good and bad safety culture.

| Examples of poor safety culture | Examples of good safety culture |
|---|---|
| Schedule and cost are the ultimate goals of product development. | Safety is our highest priority. |
| Processes are defined "ad hoc" or implicitly. | A defined, traceable, and controlled process is implemented at all levels (management, development, verification, etc.). |
| Decisions are made by "majority." | Decisions are preceded by sound technical analyses and can be made objectively. |
| Decisions are only made in meetings without sufficient preparation. | |

The goal should always be to establish a good safety culture within the company. Finding errors that objectively exist must be permitted and encouraged. To that end, measures and mechanisms must be established to detect and prevent errors effectively.

- Error prevention, error detection and error handling

Risk assessment and the systematic derivation of safety requirements lay the foundation for preventing, detecting, and handling errors at various levels of abstraction. The key term here is single-fault safety (also called first-fault safety).

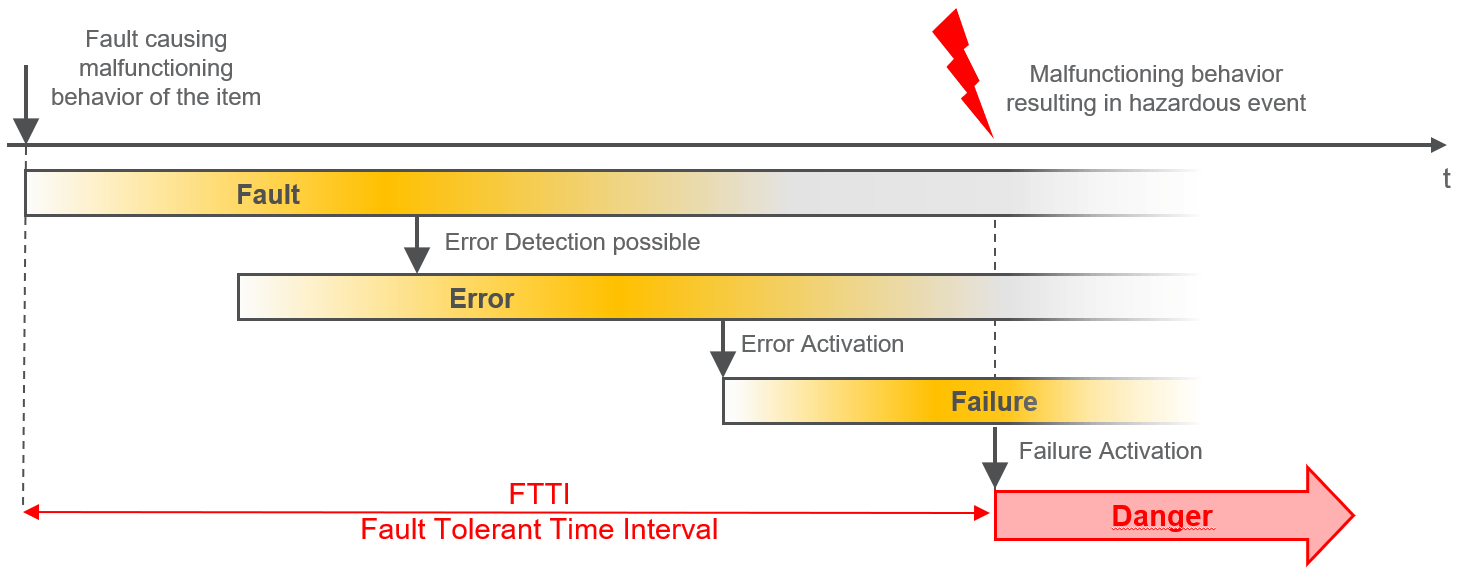

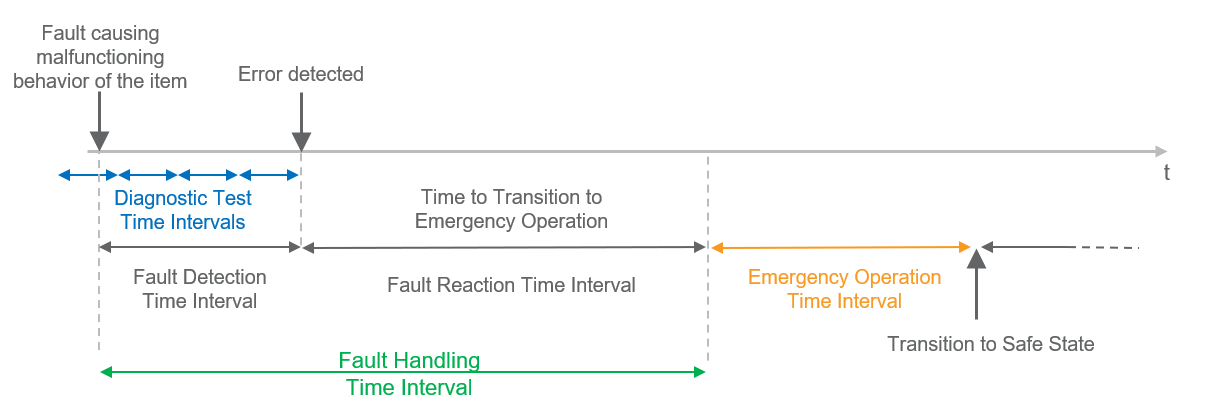

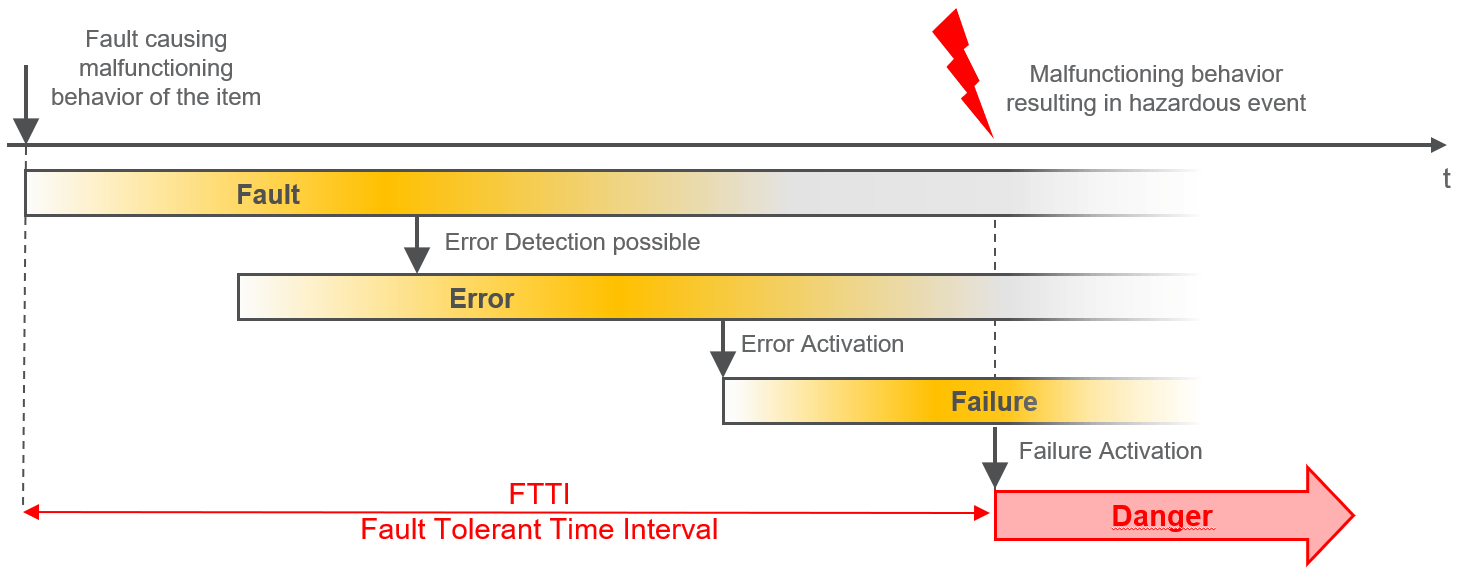

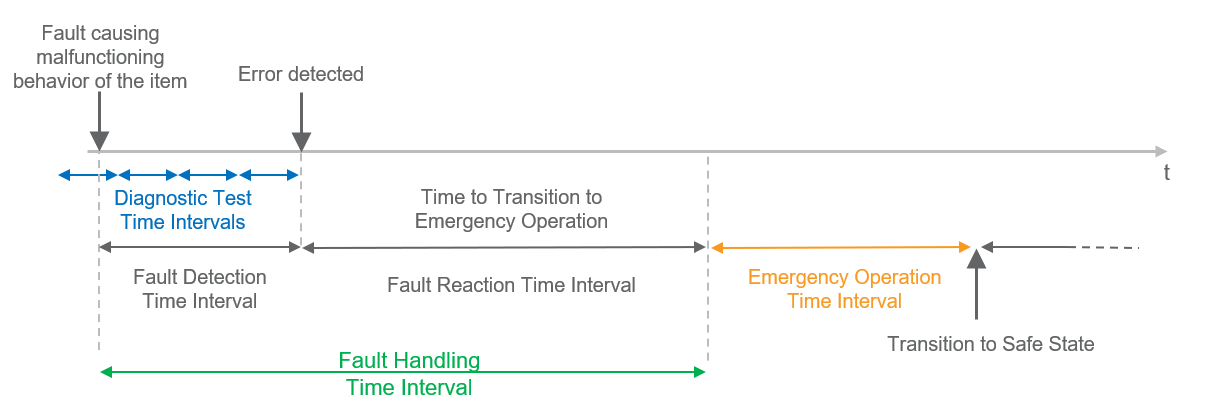

Here, however, we focus on the transitions between fault, error, and failure. The following figures illustrate these transitions, once without and once with a safety mechanism.

Figure 3: Relationship between error causes and failures (without safety mechanism)

Figure 4: Relationship between error cause and safety mechanism with emergency operation

The figures show at a glance that many, often non-trivial, dependencies along the causal chains in a system must be taken into account. Safety mechanisms must be designed accordingly and distributed "cleverly" along these causal chains. As a result, one and the same technical mechanism can differ in effectiveness from system to system, because different error causes may underlie it.
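The difference between the two figures can be sketched in a few lines of code. The following is a minimal illustration, not a real device design: the flow-rate signal, the plausibility range, and the fallback value are all hypothetical and chosen only to show how a detected error is diverted into emergency operation instead of propagating to a failure.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    NORMAL = "normal"
    EMERGENCY = "emergency"


@dataclass
class Output:
    mode: Mode
    flow_rate: float  # hypothetical actuator command


# Hypothetical limits and fallback value, for illustration only.
PLAUSIBLE_RANGE = (0.0, 60.0)
SAFE_FALLBACK_RATE = 10.0


def control_without_mechanism(sensor_value: float) -> Output:
    """The erroneous value propagates unchecked to the output (failure)."""
    return Output(Mode.NORMAL, sensor_value)


def control_with_mechanism(sensor_value: float) -> Output:
    """A plausibility check detects the error and triggers emergency operation."""
    low, high = PLAUSIBLE_RANGE
    # The comparison is also False for NaN, so NaN is treated as an error.
    if not (low <= sensor_value <= high):
        return Output(Mode.EMERGENCY, SAFE_FALLBACK_RATE)
    return Output(Mode.NORMAL, sensor_value)


# A sensor fault produces an implausible reading (the error).
faulty_reading = 500.0
unprotected = control_without_mechanism(faulty_reading)
protected = control_with_mechanism(faulty_reading)
```

Note that this range check only covers faults that show up as out-of-range values. A fault that yields a wrong but plausible reading would pass unnoticed, which is one reason the same mechanism can be effective in one system and ineffective in another.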

It also becomes clear that reliable systems can only be developed with a sound, systematic approach.

- Safety analyses

Aspects specified and implemented during the design phase should be continuously and independently evaluated and systematically challenged from a "different perspective" by means of safety analyses. Such analyses always build on the architecture and design, which must cover both static and dynamic aspects.
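One widely used top-down technique is fault tree analysis (FTA), in which a top-level hazard is broken down into combinations of basic events. As a rough sketch of the underlying arithmetic, the example below evaluates a tiny fault tree under the simplifying assumption that all basic events are statistically independent. The tree structure and all probabilities are hypothetical.

```python
from math import prod


def and_gate(*probabilities: float) -> float:
    """All inputs must occur: product of independent probabilities."""
    return prod(probabilities)


def or_gate(*probabilities: float) -> float:
    """At least one input occurs: 1 minus the probability that none occurs."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical basic-event probabilities (per operating hour).
p_sensor_fault = 1e-4
p_monitor_fault = 1e-3
p_software_fault = 1e-5

# Hypothetical tree: the hazard occurs if the software fails, OR if the
# sensor fails AND the monitoring mechanism fails at the same time.
p_top = or_gate(p_software_fault, and_gate(p_sensor_fault, p_monitor_fault))
print(f"Top event probability: {p_top:.3e}")
```

In this made-up tree, the unmonitored software fault dominates the result, which illustrates how such an analysis can point to the branch where an additional safety mechanism would help most.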

We recommend a combination of top-down analyses (e.g. FTA) and bottom-up analyses (e.g. FME(D)A).

- Traceability

Among many other characteristics, traceability is one of the most important in requirements management. For us, however, well-practiced traceability means more: beyond the formal links required by requirements management, the traced elements should also be consistent with one another in terms of content. Well-practiced traceability not only helps during the design phase but also makes it possible to build a sound safety argument.
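The formal side of traceability lends itself to automated checking. The sketch below assumes a hypothetical, simplified data model in which each safety requirement lists the hazards or requirements it is derived from and the test cases that verify it. All identifiers and field names are invented for illustration and do not correspond to any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    text: str
    derived_from: list[str] = field(default_factory=list)  # parent hazard/requirement IDs
    verified_by: list[str] = field(default_factory=list)   # test case IDs


def find_trace_gaps(requirements, known_hazards):
    """Return requirement IDs that lack an upward or downward trace link."""
    known_ids = set(known_hazards) | {r.req_id for r in requirements}
    gaps = {"no_origin": [], "unknown_origin": [], "no_verification": []}
    for r in requirements:
        if not r.derived_from:
            gaps["no_origin"].append(r.req_id)
        elif any(parent not in known_ids for parent in r.derived_from):
            gaps["unknown_origin"].append(r.req_id)
        if not r.verified_by:
            gaps["no_verification"].append(r.req_id)
    return gaps


# Hypothetical example data.
hazards = {"HAZ-1"}
reqs = [
    Requirement("SR-1", "Detect implausible sensor values", ["HAZ-1"], ["TC-7"]),
    Requirement("SR-2", "Enter emergency operation on detected error", ["SR-1"], []),
    Requirement("SR-3", "Log all mode changes", [], ["TC-9"]),
]
gaps = find_trace_gaps(reqs, hazards)
```

A check like this only confirms that links exist. Whether a linked test actually demonstrates the requirement, and whether the requirement actually mitigates the hazard, remains a matter of content that reviewers have to judge.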

Conclusion

The development of reliable systems is a complex topic that requires a holistic approach throughout the entire development lifecycle. Attempting to construct a comprehensive safety argument retrospectively, after development is complete, is highly risky and not recommended. Furthermore, completed developments are usually very rigid and can be modified only with great difficulty, if at all. This makes a safety argument either very complicated or achievable only under considerable commercial pressure.

If you are involved in the development of safety-relevant systems, our experts from exida.com are happy to help you with everything from gap analyses, process modeling, technical specifications, and safety analyses to safety assessments. We also offer training courses, which we can conduct either in our own training facilities or in-house at your location.

Links

[1] //www.seattletimes.com/business/boeing-aerospace/failed-certification-faa-missed-safety-issues-in-the-737-max-system-implicated-in-the-lion-air-crash/

[2] //www.edn.com/design/automotive/4423428/Toyota-s-killer-firmware–Bad-design-and-its-consequences

[3] //www.medtronic.com/content/dam/medtronic-com/us-en/corporate/documents/REV-Medtronic-2090-Security-Bulletin_FNL.pdf

[4] “Out of Control: Why Control Systems go Wrong and How to Prevent Failure,” UK: Sheffield, Health and Safety Executive, 1995